Server Buying Decisions: Memory

by Johan De Gelas on December 19, 2013 10:00 AM EST- Posted in

- Enterprise

- Memory

- IT Computing

- Cloud Computing

- server

Measuring Stream Throughput

Before we start with the real-world tests, it is good to perform a few low-level benchmarks. First, we measured memory bandwidth in Linux with the Stream benchmark. The binary was compiled with the Open64 compiler 5.0 (Opencc). It is a multi-threaded, OpenMP-based, 64-bit binary. The following compiler switches were used:

-Ofast -mp -ipa

The results are expressed in GB per second. Note that we also tested with gcc 4.8.1 and the following compiler options:

-O3 -fopenmp -static

Results were consistently 20 to 30% lower with gcc. So we feel our choice of Open64 is appropriate: everybody can reproduce our results (Open64 is freely available), and because the binary is capable of reaching higher speeds, it is easier to spot speed differences between DIMMs. We equipped the HP server with a Sandy Bridge EP Xeon (Xeon E5-2690) and an Ivy Bridge EP Xeon (Xeon E5-2680 v2). Note that the Stream benchmark is not limited by the CPUs at all. All tests were done with 32 or 40 threads.
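For readers who want to see how a Stream-style GB/s figure is derived, here is a minimal sketch of the accounting for the triad kernel, `a[i] = b[i] + scalar * c[i]`. It assumes 8-byte doubles and counts two loads and one store per element, which is how the standard benchmark tallies traffic. The function name, the array size, and the timing in the example are hypothetical and are not values from our runs.

```python
BYTES_PER_DOUBLE = 8  # assumption: arrays hold 64-bit floating point values


def triad_bandwidth_gbs(n_elements, seconds):
    """Return GB/s for one triad pass a[i] = b[i] + scalar * c[i].

    Stream-style accounting: per element, two loads (b and c) and
    one store (a), so 3 * 8 = 24 bytes of traffic are counted.
    """
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    bytes_moved = 3 * BYTES_PER_DOUBLE * n_elements
    return bytes_moved / seconds / 1e9


if __name__ == "__main__":
    # Hypothetical numbers for illustration only.
    n = 80_000_000   # elements per array
    t = 0.024        # seconds for the best triad pass
    print(f"{triad_bandwidth_gbs(n, t):.1f} GB/s")
```

Because the figure depends only on bytes moved and elapsed time, a compiler that generates more efficient loads and stores raises the reported bandwidth even though the DIMMs are unchanged.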

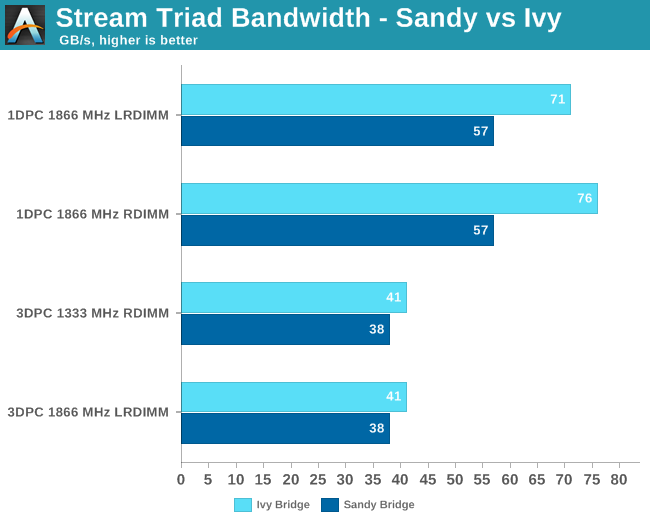

The extra buffering inside the LRDIMMs has very little impact on the effective bandwidth. RDIMMs deliver only 3% more bandwidth at 1866 MHz, 1DPC. This gap disappears entirely when we run the same test on our "Sandy Bridge EP" Xeon.

At 3DPC, there is no bandwidth gap at all: both DIMM types run at the same speed. Also note that the newer Xeon outperforms the older one by 8 to 33% in this test.
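To put measured Stream numbers in context, it can help to compare them with the theoretical peak of the memory subsystem and to express differences between DIMM types as a percentage. The sketch below assumes four 64-bit memory channels per socket and two sockets. That matches the Xeon E5-2600 platform, but it is an assumption and is not stated in the text above. The function names and the example bandwidth values are hypothetical.

```python
def peak_bandwidth_gbs(mega_transfers_per_s, channels=4, sockets=2, bus_bytes=8):
    """Theoretical peak in GB/s.

    Assumptions (not from the article): 4 channels per socket,
    2 sockets, and a 64-bit (8-byte) data bus per channel.
    """
    bytes_per_s = mega_transfers_per_s * 1e6 * bus_bytes * channels * sockets
    return bytes_per_s / 1e9


def relative_gap_percent(faster, slower):
    """How much higher `faster` is than `slower`, in percent."""
    return (faster / slower - 1.0) * 100.0


if __name__ == "__main__":
    peak = peak_bandwidth_gbs(1866)
    print(f"Peak at 1866 MT/s: {peak:.1f} GB/s")
    # Hypothetical measured values, for illustration only.
    rdimm, lrdimm = 82.4, 80.0
    print(f"RDIMM advantage: {relative_gap_percent(rdimm, lrdimm):.1f}%")
```

Measured Stream results always land below this peak, so the ratio of measured to theoretical bandwidth is a useful sanity check when comparing configurations.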

27 Comments


slideruler - Thursday, December 19, 2013 - link

Am I the only one who's concerned about DDR4 in our future? Given that it's one-to-one, we'll lose the ability to stuff our motherboards with cheap sticks to get to a "reasonable" (>=128gig) amount of RAM... :(

just4U - Thursday, December 19, 2013 - link

You really shouldn't need more than 640kb.... :D

just4U - Thursday, December 19, 2013 - link

seriously though .. DDR3 prices have been going up. as near as I can tell they're approximately 2.3X the cost of what they once were. Memory makers are doing the semi-happy dance these days and likely looking forward to the 5x pricing schemes of yesteryear.

MrSpadge - Friday, December 20, 2013 - link

They have to come up with something better than "1 DIMM per channel using the same amount of memory controllers" for servers.

theUsualBlah - Thursday, December 19, 2013 - link

the -Ofast flag for Open64 will relax ansi and ieee rules for calculations, whereas the GCC flags won't do that. maybe that's the reason Open64 is faster.

JohanAnandtech - Friday, December 20, 2013 - link

Interesting comment. I ran with gcc, Opencc with O2, O3 and Ofast. If the gcc binary is 100%, I get 110% with Opencc (-O2), 130% (-O3) and the same with Ofast.

theUsualBlah - Friday, December 20, 2013 - link

hmm, that's very interesting. i am guessing Open64 might be producing better code (at least) when it comes to memory operations. i gave up on Open64 a while back and maybe i should try it out again.

thanks!

GarethMojo - Friday, December 20, 2013 - link

The article is interesting, but alone it doesn't justify the expense for high-capacity LRDIMMs in a server. As server professionals, our goal is usually to maximise performance / cost for a specific role. In this example, I can't imagine that better performance (at a dramatically lower cost) would not be obtained by upgrading the storage pool instead. I'd love to see a comparison of increasing memory sizes vs adding more SSD caching, or combinations thereof.

JlHADJOE - Friday, December 20, 2013 - link

Depends on the size of your data set as well, I'd guess, and whether or not you can fit the entire thing in memory. If you can, and considering RAM is still orders of magnitude faster than SSDs, I imagine memory still wins out in terms of overall performance. Too large to fit in a reasonable amount of RAM and yes, SSD caching would possibly be more cost effective.

MrSpadge - Friday, December 20, 2013 - link

One could argue that the storage optimization would be done for both memory configurations.